Why bpmn-to-code

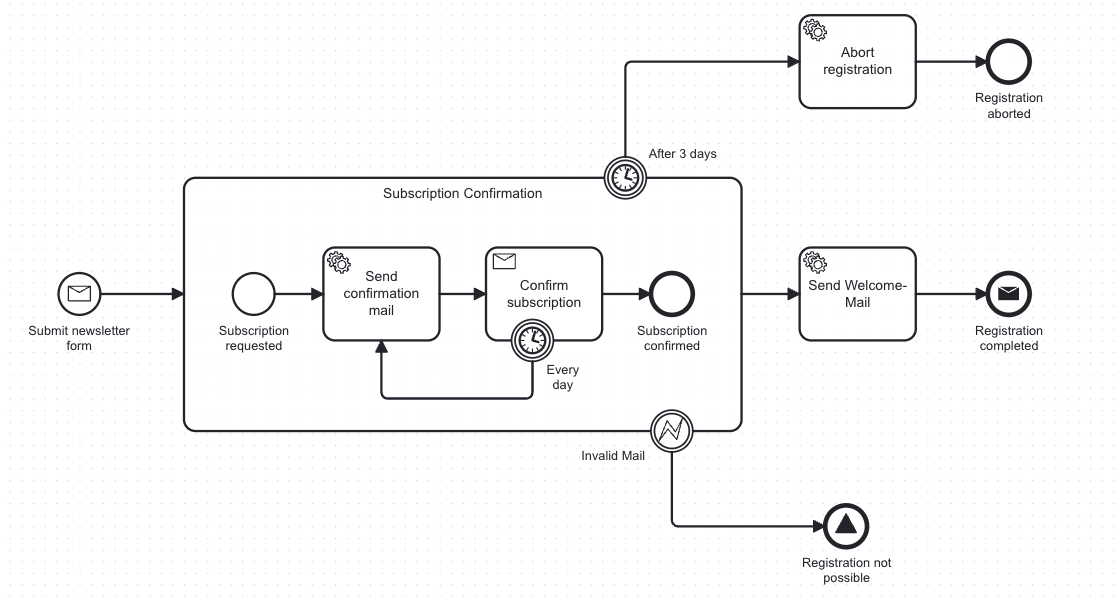

You have just modeled a process. Tasks, messages, signals, timers — laid out in Camunda Modeler. Now you need to implement it. You open your IDE and start wiring the code to the process model.

This is what that looks like:

```kotlin
// Scattered across your codebase
client.newWorker()
    .jobType("Activity_ConfirmRegistration") // copy-pasted from the modeler
    .handler { ... }
    .open()

correlationClient.newPublishMessageCommand()
    .messageName("Message_SubscriptionConfirmed") // hope nobody renames this
    .correlationKey(subscriptionId)
    .send()
```

You copy these strings from the modeler. If someone renames a task or refactors a message name, nothing breaks at compile time. You find out at runtime, when a worker stops picking up jobs or a message correlation silently fails.

Three Pillars

Generate — Type-Safe APIs from BPMN

bpmn-to-code reads your BPMN files and generates a typed Process API from them. Every element ID, message name, and signal reference becomes a constant — derived directly from the XML, on every build.

For a newsletter subscription process like the one above, bpmn-to-code generates:

```kotlin
// Generated by bpmn-to-code from the BPMN model
object NewsletterSubscriptionProcessApi {
    const val PROCESS_ID = "newsletterSubscription"

    object Elements {
        const val ACTIVITY_CONFIRM_REGISTRATION = "Activity_ConfirmRegistration"
        const val ACTIVITY_SEND_WELCOME_MAIL = "Activity_SendWelcomeMail"
    }

    object Messages {
        const val MESSAGE_SUBSCRIPTION_CONFIRMED = "Message_SubscriptionConfirmed"
    }
}
```

The hardcoded strings from before become typed constants:

```kotlin
client.newWorker()
    .jobType(NewsletterSubscriptionProcessApi.Elements.ACTIVITY_CONFIRM_REGISTRATION)
    .handler { ... }
    .open()
```

Typos become compiler errors. Renamed elements break the build before they break production.
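To make the "derived directly from the XML" step more tangible, here is a minimal sketch of the idea using only the JDK's XML parser. It is not the plugin's actual implementation: `extractElementIds` and `toConstantName` are hypothetical helpers, and the naming rule (split camel case, upper-case everything) is an assumption inferred from the constants shown above.

```kotlin
import java.io.ByteArrayInputStream
import javax.xml.parsers.DocumentBuilderFactory

// Hypothetical helper: collects the `id` attribute of every element with the
// given local tag name (for example "serviceTask"), regardless of namespace prefix.
fun extractElementIds(bpmnXml: String, localName: String): List<String> {
    val factory = DocumentBuilderFactory.newInstance().apply { isNamespaceAware = true }
    val document = factory.newDocumentBuilder()
        .parse(ByteArrayInputStream(bpmnXml.toByteArray(Charsets.UTF_8)))
    val nodes = document.getElementsByTagNameNS("*", localName)
    return (0 until nodes.length).mapNotNull { index ->
        nodes.item(index).attributes?.getNamedItem("id")?.nodeValue
    }
}

// Hypothetical naming rule (an assumption, not the plugin's documented behavior):
// insert "_" at lower-to-upper boundaries, normalize separators, then upper-case.
fun toConstantName(elementId: String): String =
    elementId
        .replace(Regex("(?<=[a-z0-9])(?=[A-Z])"), "_")
        .replace(Regex("[^A-Za-z0-9]+"), "_")
        .uppercase()
```

Calling `extractElementIds(xml, "serviceTask").associateBy(::toConstantName)` would give a map from constant name to element ID. The point of the sketch is that every step is a fixed rule over the XML, so the same model always yields the same constants.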

Validate — Architecture Rules for BPMN

Just as ArchUnit lets you write architecture tests for Java code, bpmn-to-code-testing lets you write architecture tests for your BPMN models. Catch missing service task implementations, undefined timers, and naming violations — at build time, in CI, before deployment.

```kotlin
BpmnValidator
    .fromClasspath("bpmn/")
    .engine(ProcessEngine.ZEEBE)
    .validate()
    .assertNoViolations()
```

The standalone validateBpmnModels Gradle task (and validate-bpmn Maven goal) runs the same checks as part of your build, with no test code needed.
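To illustrate what a model-level architecture rule amounts to, here is a minimal sketch in plain Kotlin. It deliberately does not use the bpmn-to-code-testing API: `ModelElement`, `Violation`, `Rule`, and `validate` are hypothetical names, and the `Activity_` prefix convention is an assumption taken from the IDs used in this article.

```kotlin
// Hypothetical, simplified view of a BPMN element (not the library's model).
data class ModelElement(val id: String, val elementType: String, val jobType: String? = null)

data class Violation(val elementId: String, val message: String)

// A rule inspects one element and returns a violation, or null if the element passes.
typealias Rule = (ModelElement) -> Violation?

// Flags service tasks that have no implementation (job type) configured.
val serviceTaskHasJobType: Rule = { element ->
    if (element.elementType == "SERVICE_TASK" && element.jobType.isNullOrBlank())
        Violation(element.id, "service task has no job type")
    else null
}

// Flags service tasks whose ID does not follow the assumed "Activity_" convention.
val serviceTaskIdHasPrefix: Rule = { element ->
    if (element.elementType == "SERVICE_TASK" && !element.id.startsWith("Activity_"))
        Violation(element.id, "service task ID should start with \"Activity_\"")
    else null
}

// Applies every rule to every element and collects all violations.
fun validate(elements: List<ModelElement>, rules: List<Rule>): List<Violation> =
    elements.flatMap { element -> rules.mapNotNull { rule -> rule(element) } }
```

A test would then assert that `validate(...)` returns an empty list, which is the same shape as the `assertNoViolations()` call above: rules are plain functions over the model, so they run at build time without a process engine.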

Surface — Process Structure in Code

bpmn-to-code generates a structured JSON file alongside the Kotlin/Java API. It contains every flow node with its display name, element type, sequence flows, and variables — sorted in process-flow order (DFS from start events).

```json
{
  "processId": "newsletterSubscription",
  "flowNodes": [
    { "id": "StartEvent_SubmitRegistrationForm", "displayName": "Submit newsletter form", "elementType": "START_EVENT" },
    { "id": "Activity_SendConfirmationMail", "displayName": "Send confirmation mail", "elementType": "SERVICE_TASK" }
  ]
}
```

This makes your process structure readable to:

- AI agents — paste or stream the JSON into your coding assistant's context; it understands your process without parsing raw BPMN XML

- Code reviewers — read what changed in a process model directly in a pull request diff, without opening Camunda Modeler

- CI pipelines — validate or query the process structure programmatically

The determinism guarantee matters here: the JSON is produced by fixed rules, not generated by an LLM. AI agents get reliable, structured process context — no hallucinated element IDs.
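The "process-flow order (DFS from start events)" mentioned above can be sketched as a plain depth-first traversal. This is an illustration under stated assumptions, not the plugin's code: `processFlowOrder` is a hypothetical function, and sequence flows are assumed to be available as a map from source node ID to target node IDs in document order.

```kotlin
// Hypothetical sketch: orders flow node IDs by pre-order depth-first traversal,
// starting from the given start events and following outgoing sequence flows.
fun processFlowOrder(
    startEventIds: List<String>,
    outgoing: Map<String, List<String>>,
): List<String> {
    // LinkedHashSet keeps insertion order, so the visit order is the result order.
    val visited = LinkedHashSet<String>()

    fun visit(nodeId: String) {
        // add() returns false for already-visited nodes, which also stops loops.
        if (!visited.add(nodeId)) return
        outgoing[nodeId].orEmpty().forEach { target -> visit(target) }
    }

    startEventIds.forEach { start -> visit(start) }
    return visited.toList()
}
```

Because the traversal depends only on the start events and the order of the outgoing flows, the same model produces the same node order on every run, which is what keeps pull request diffs of the JSON readable.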

Does AI Make This Obsolete?

My name is Marco. I built bpmn-to-code to solve exactly this. If you're interested in the whole story, you can find it here. Lately, I have been asking myself whether it was the right call — or whether AI has already made it obsolete.

AI coding assistants are getting better every month. They read XML, understand process structures, and generate code. You can paste a BPMN file into Claude, describe what you want, and get typed constants back.

You could even build an AI Skill that replaces the plugin entirely — scan the project for BPMN files, generate all the constants, write the output to disk. No build plugin, no configuration, no dependency.

And honestly? I can't fully deny that. So I figured I'd make it official. I pointed bpmn-to-code at Death by Clawd, a tool that (even if not dead-serious 😄) scores how easily AI can replace a software product.

The verdict was clear: 91 out of 100. Already dead. Cause of death: "Prompt engineering presented as a product."

My defense? None. Guilty as charged — unless you ever need to do this more than once.

Why It Still Makes Sense

Every time your BPMN model changes, you rebuild — and the Process API updates automatically. Your IDE knows every element by name. Rename a task in the modeler, and the compiler tells you exactly what broke. No string search, no grepping through the codebase, no silent failures at runtime.

And when generation is wired into your build, it runs the same way everywhere — on every machine, every branch, every CI pipeline. That shifts the question from "can AI produce this output?" to "can I trust this output unconditionally, on every run, with no external calls?"

This is where bpmn-to-code shines:

- Deterministic output — Same BPMN in, same code out. Every run, every machine, every time. An LLM can vary across model versions, reorder fields, or introduce formatting drift. Diffs stay clean only when output is byte-identical.

- No hallucination risk — The plugin maps XML to code via deterministic rules. It cannot invent an element ID or silently drop a service task. Build pipelines need guarantees, not probabilities.

- No external dependencies — No network calls, no API keys, no rate limits, no model deprecations. Works offline. Never flakes because a provider had an outage.

| Concern | Build toolkit | AI Skill / LLM |
|---|---|---|
| Output stability | Byte-identical | May vary across model versions |
| CI/CD | Offline, no API dependency | Requires network + API key |
| Hallucination risk | None | Possible invented IDs or drift |
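The "same BPMN in, same code out" property is something a build can check mechanically. The sketch below hashes the output of two runs and compares the digests. `sha256Hex` and `isByteIdentical` are hypothetical helpers, and the generator is passed in as a plain function, so nothing here depends on the plugin's API.

```kotlin
import java.security.MessageDigest

// Returns the SHA-256 digest of the content as a lowercase hex string.
fun sha256Hex(content: String): String =
    MessageDigest.getInstance("SHA-256")
        .digest(content.toByteArray(Charsets.UTF_8))
        .joinToString("") { byte -> "%02x".format(byte) }

// Hypothetical check: runs the generator twice on the same input and
// reports whether both outputs hash to the same digest.
fun isByteIdentical(generate: (String) -> String, input: String): Boolean =
    sha256Hex(generate(input)) == sha256Hex(generate(input))
```

A rule-based generator passes this check trivially. A generator that embeds timestamps, depends on iteration order of unordered collections, or calls out to a model that may answer differently between runs can fail it, and that is the difference the table above summarizes.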

The Surface pillar (JSON export) is where these two worlds meet: bpmn-to-code produces the reliable, deterministic process context that makes AI assistance trustworthy. Use the toolkit for generation and validation; use AI to work with the output.

What I Suggest

Use the toolkit for deterministic generation and validation. Use AI for everything around it:

- Setting up the plugin in a new project — detecting BPMN files, configuring the build, choosing the right engine.

- Migrating existing codebases from hardcoded strings to the generated type-safe API.

- Scaffolding worker implementations, REST controllers, and test boilerplate from the generated Process API.

- Understanding a process — feed the JSON export to your coding assistant and ask questions about it.

To support these workflows, bpmn-to-code ships with AI Skills that automate them and an MCP Server for AI-assisted generation inside your editor. Both are optional; the build plugins work standalone.

How to Get Started

The generation engine is the same everywhere. Pick the format that fits your workflow.

| Format | Best for | Link |
|---|---|---|
| Gradle plugin | JVM projects using Gradle | Setup guide |
| Maven plugin | JVM projects using Maven | Setup guide |
| Web app | Trying it out, one-off generation, non-JVM projects | Web app |
| MCP server | AI-assisted generation inside your editor | MCP Server |